In July, Bing updated its webmaster guidelines. At Boss Funnel, we have translated the recommendations that explain how the search engine finds, indexes, and ranks sites in its search results. Pay particular attention to the ranking factors and to the examples of things to avoid when promoting a site on Bing, so that you do not trip its spam filters or get dropped from the index.

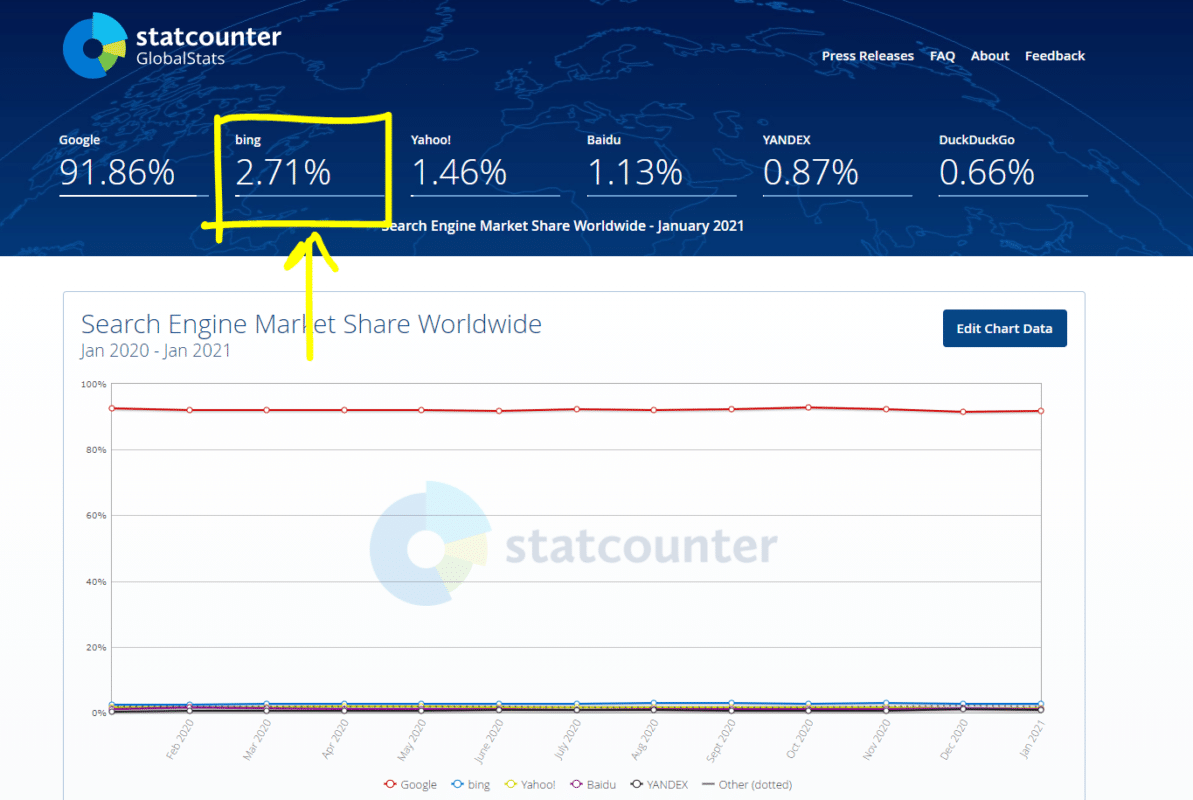

Even if you don’t use bing.com, its indexing and ranking principles can give you a better idea of how today’s search engines work. Keep in mind, though, that Bing’s global share is only around 3%.

How does Bing index sites?

1. Your Sitemap

Bing recommends using an XML sitemap to help the crawler discover all the important URLs of your site. It’s also important to keep the sitemap up to date, ideally by regenerating it daily (a dynamic sitemap), and to remove old URLs and dead links that point to content that no longer exists.

- Submit the sitemap in Bing Webmaster Tools.

- Make sure robots.txt also lists the sitemap’s address in a Sitemap directive.

Once Bing has access to the sitemap, the search engine will crawl it regularly, and there is no need to resubmit it, except after significant changes on the site.

General recommendations for sitemaps:

- Bing supports several sitemap formats: XML, RSS, mRSS, Atom 1.0, and a plain text file.

- Bing crawls only the page addresses listed in the sitemap.

- List only canonical URLs.

- If the site has several versions (HTTP/HTTPS, desktop/mobile), it is still recommended to provide a single sitemap. For URLs unique to one version (such as mobile pages), use the rel="alternate" attribute.

- If a site supports multiple languages, use the hreflang attribute, either in the sitemap or in the HTML of individual pages, to indicate alternative versions of a URL.

- To indicate when a page was last changed, use the <lastmod> tag.

- The maximum size of a sitemap is 50,000 URLs / 50 MB (Yandex has the same limits). If the project is large, you can split the sitemap into several files and list their addresses in one sitemap index file.
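The splitting rule above can be sketched in a few lines of Python. This is a minimal illustration, not an official tool: the file naming scheme (sitemap-1.xml, sitemap-2.xml, ...) and the example.com addresses are assumptions made for the example, and only the 50,000-URL limit is enforced (the 50 MB limit is not checked).

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # URL limit cited in the recommendations above


def build_sitemap(urls, lastmod):
    """Return one <urlset> document (as bytes) for a chunk of URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        node = ET.SubElement(urlset, "url")
        ET.SubElement(node, "loc").text = url
        ET.SubElement(node, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


def build_sitemap_index(sitemap_urls, lastmod):
    """Return a <sitemapindex> document (as bytes) pointing at each sitemap file."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for url in sitemap_urls:
        node = ET.SubElement(index, "sitemap")
        ET.SubElement(node, "loc").text = url
        ET.SubElement(node, "lastmod").text = lastmod
    return ET.tostring(index, encoding="utf-8", xml_declaration=True)


def split_into_sitemaps(urls, base_url, lastmod, chunk_size=MAX_URLS_PER_FILE):
    """Split a URL list into sitemap files plus one index file.

    The file names are a hypothetical convention chosen for this sketch.
    """
    files = {}
    for start in range(0, len(urls), chunk_size):
        name = f"sitemap-{start // chunk_size + 1}.xml"
        files[name] = build_sitemap(urls[start:start + chunk_size], lastmod)
    index = build_sitemap_index([f"{base_url}/{name}" for name in files], lastmod)
    return files, index
```

Calling split_into_sitemaps(urls, "https://www.example.com", "2021-02-05") returns a dictionary of file names to XML bytes and the index document; writing them to disk and submitting the index address is left to the site's own tooling.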

2. Content Submission API

Bing can index new content quickly when you send it URLs through the API in real time. If the webmaster doesn’t have this option, it’s a good idea to add new or updated pages to the sitemap or submit them in the Bing Webmaster Tools console.
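As a rough idea of what such a submission looks like, here is a sketch that only builds the HTTP request and does not send it. The endpoint address and the siteUrl / urlList payload fields reflect my understanding of Bing's URL submission API and are assumptions to verify against the current Bing Webmaster Tools documentation; the API key and URLs are placeholders.

```python
import json
import urllib.request

# Assumption: endpoint for Bing's batch URL submission; check the current docs.
BING_SUBMIT_ENDPOINT = "https://ssl.bing.com/webmaster/api.svc/json/SubmitUrlBatch"


def build_submission_request(api_key, site_url, urls):
    """Build (but do not send) a POST request submitting URLs for indexing.

    The payload field names are assumed, not confirmed by this article.
    """
    payload = json.dumps({"siteUrl": site_url, "urlList": list(urls)}).encode("utf-8")
    return urllib.request.Request(
        f"{BING_SUBMIT_ENDPOINT}?apikey={api_key}",
        data=payload,
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


request = build_submission_request(
    "YOUR_API_KEY",  # placeholder, generated in Bing Webmaster Tools
    "https://www.example.com",
    ["https://www.example.com/new-post"],
)
# To actually submit: urllib.request.urlopen(request)
```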

3. Links

Traditionally, links signal the popularity of a site. According to Bing, the best way to earn them is to create quality, unique content. Bingbot follows internal (on-site) and external links (from other sites) to discover content to crawl and index.

Bing recommends setting up internal linking so that every page is linked from at least one other page that is easy to crawl.

- Crawlable links are standard <a> tags with an href attribute. Anchor text, and alt attributes for image links, should be semantically related to the content of the page on which the links and images are placed.

- Limit the number of links on a page to no more than a few thousand.

- For all paid and/or ad links, you must use the rel="nofollow" or rel="sponsored" attribute (and rel="ugc" for links posted by users, for example, in comments). This stops Bing’s crawler from following them, so they will not hurt your ranking. Google has supported these attributes since 2019.

- Bing encourages natural links: links to reliable, relevant sites, placed by content creators, that bring real users from their sites to yours.

4. Limit the total number of URLs on the site

The number of pages should be within reason (Bing does not give a specific figure for what counts as “reasonable”). Avoid duplicate content:

- Use the rel="canonical" attribute to indicate the canonical version of a page when several URLs serve the same content.

- Configure URL (GET) parameter handling to improve crawl efficiency and reduce variations of the same URL with the same content. For example, in Bing Webmaster Tools, you can set a rule to ignore specific URL parameters.

- Avoid separate mobile URLs if possible. Bing recommends using a single URL for both the mobile and desktop versions of a page.
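To make the first two points concrete, here is a small sketch that collapses URL variations into one canonical address and emits the matching link tag. The set of ignored parameters is a hypothetical example; which parameters are safe to drop depends entirely on your own site.

```python
import html
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters that do not change the page content on this site.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "ref"}


def canonical_url(url):
    """Drop ignored query parameters, sort the rest, and strip the fragment."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    kept.sort()
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), parts.path or "/", urlencode(kept), "")
    )


def canonical_link_tag(url):
    """Return the <link rel="canonical"> tag for the page's <head>."""
    return f'<link rel="canonical" href="{html.escape(canonical_url(url), quote=True)}">'
```

With this rule, https://Example.com/shoes?utm_source=x&color=red&size=9 and https://example.com/shoes?size=9&color=red both map to the same canonical address, so only one variant needs to be indexed.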

5. Use redirects if necessary

If content has moved, use a 301 redirect and keep it in place for at least 3 months. If the move is temporary (no more than a day), use a 302 redirect. Don’t confuse declaring a canonical URL with rel="canonical" and redirecting: a redirect is for content that has moved to a different page.
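The difference between the two status codes can be shown with a tiny WSGI application. This is only a sketch: the paths in both tables are made up for illustration, and a real site would normally configure redirects in its web server or CMS.

```python
from http import HTTPStatus

# Hypothetical redirect tables for illustration.
PERMANENT_MOVES = {"/old-guide": "/guides/bing"}  # content moved for good -> 301
TEMPORARY_MOVES = {"/sale": "/maintenance"}       # short-lived move -> 302


def app(environ, start_response):
    """Minimal WSGI app that answers moved paths with 301 or 302."""
    path = environ.get("PATH_INFO", "/")
    if path in PERMANENT_MOVES:
        status, target = HTTPStatus.MOVED_PERMANENTLY, PERMANENT_MOVES[path]
    elif path in TEMPORARY_MOVES:
        status, target = HTTPStatus.FOUND, TEMPORARY_MOVES[path]
    else:
        start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
        return [b"ok"]
    start_response(f"{status.value} {status.phrase}", [("Location", target)])
    return [b""]
```

The app can be served locally with wsgiref.simple_server.make_server("", 8000, app) to watch the response headers.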

6. Set up scanning rules

Bing Webmaster Tools has crawl control settings for adjusting Bingbot’s crawl rate and the times of day it crawls your site.

7. JavaScript

Bingbot can crawl content rendered with JavaScript, but with several limitations. To avoid problems, use dynamic rendering (the crawler receives a static version of the page, while users receive the version with interactive JS elements). The method is universal and also works for Google and Yandex.
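At its core, dynamic rendering is a routing decision based on who is asking. The sketch below shows only that decision; the crawler tokens are an assumed, incomplete list, and matching on the User-Agent string alone is a simplification (user agents can be spoofed).

```python
# Assumption: substrings that identify crawler user agents; not exhaustive.
CRAWLER_TOKENS = ("bingbot", "googlebot", "yandex")


def select_variant(user_agent):
    """Return which version of a page to serve for the given User-Agent."""
    ua = (user_agent or "").lower()
    if any(token in ua for token in CRAWLER_TOKENS):
        return "prerendered"  # static HTML snapshot of the page
    return "interactive"      # normal page with client-side JavaScript


bingbot_ua = "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"
browser_ua = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36"
variant_for_bot = select_variant(bingbot_ua)
variant_for_user = select_variant(browser_ua)
```

Both variants must carry the same content; serving crawlers something different from what users see is cloaking, which Bing treats as spam.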

8. 404-error

For deleted pages, return HTTP 404. You can also use Bing Webmaster Tools to speed up de-indexing by submitting the URL for removal.

9. Robots.txt

The file gives Bingbot instructions for crawling your site. Recommendations from Bing:

- Place robots.txt in the site’s root directory.

- Using Disallow does not guarantee that a page will stay out of the index and search results. If you want to keep a particular page out of the index, use a robots meta tag with a noindex directive.

- Check which pages are not being crawled in Bing Webmaster Tools to make sure the URLs you need are indexed.
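Before relying on a robots.txt file, it can help to check how a parser actually reads it. The sketch below uses Python's standard urllib.robotparser on a made-up robots.txt; the rules and the example.com addresses are assumptions for illustration, and Bingbot's own parser may differ in edge cases.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

Sitemap: https://www.example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Is Bingbot allowed to crawl these addresses under the rules above?
private_allowed = parser.can_fetch("bingbot", "https://www.example.com/private/report.html")
blog_allowed = parser.can_fetch("bingbot", "https://www.example.com/blog/")

# Sitemap addresses declared in the file (requires Python 3.8+).
declared_sitemaps = parser.site_maps()
```

Here private_allowed is False and blog_allowed is True. Note that this only tells you what may be crawled; as stated above, keeping a page out of the index is the job of the noindex directive, not of Disallow.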

10. Data compression in the HTTP protocol

To speed up crawling and indexing, as well as page loading on the user’s side, use gzip HTTP compression (the server sends compressed content to the browser or crawler).
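In practice the web server or CDN handles compression, but the mechanism is simple enough to sketch: the client announces gzip support in Accept-Encoding, and the server compresses the body and labels it with Content-Encoding. The function name below is hypothetical, and the header parsing is simplified (it ignores quality values such as gzip;q=0).

```python
import gzip


def maybe_compress(body: bytes, accept_encoding: str):
    """Return (payload, headers), gzip-compressing when the client supports it."""
    # Simplification: take each listed encoding name and ignore any ;q= weights.
    encodings = {token.split(";")[0].strip().lower() for token in accept_encoding.split(",")}
    if "gzip" in encodings:
        return gzip.compress(body), {"Content-Encoding": "gzip", "Vary": "Accept-Encoding"}
    return body, {"Vary": "Accept-Encoding"}


page = b"<p>Bing webmaster guidelines</p>" * 200
payload, headers = maybe_compress(page, "gzip, deflate, br")
```

For repetitive HTML like this, the compressed payload is a small fraction of the original size, which is the saving both crawlers and users benefit from.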

Help Bing understand the value of your site

Bing encourages information-rich, useful, and interesting content designed for people, not search engines. The recommendations and principles are the same as those of other modern search engines.

1. Content

Sites that mostly show ads and/or affiliate links that redirect users to other resources are poorly ranked in Bing. Moreover, in some cases they may not be indexed at all. Content should be easy to navigate and meet the needs of users.

- Create content for users, not search engines. Develop content based on search queries and intent.

- Try to create comprehensive, rich content that fully covers the topic. Above all, though, be guided by relevance, not by length or the number of keyword occurrences.

- Content should be unique. If you use material from a third party, use the rel="canonical" attribute or indicate alternative versions with rel="alternate".

- Images and videos should be related to the topic of the page. Bing can get information about them from alt and title attributes, structured data, the text around the elements, and video transcripts. However:

- Don’t rely on text embedded in images or videos. Optical character recognition is still less reliable than HTML text, so it’s worth filling in alt attributes.

- Use descriptive file names and titles for images and videos.

- Videos should be available for crawling and should not be hidden behind a login.

- Where possible, use subtitles.

- The quality of the photos can also be important.

- Optimize images and videos to increase page load speeds.

- SafeSearch. Bing uses machine learning to recognize adult content but recommends helping it identify such content by:

- Using the <meta name="rating" content="adult"> tag.

- Grouping images into a single folder, such as http://www.example.com/adult/image.png.

- Don’t use Flash or JavaScript for important content; this makes it difficult to find, crawl, and index.

- Content should be easy to navigate and accessible to users with disabilities (for example, through screen readers).

2. HTML tags

Make sure the HTML elements of the page are descriptive, specific, and accurate. In general, everything is standard here:

- <title>. It should be unique to each URL and sufficiently descriptive.

- <meta name="description">. It should contain a short description of the page and complement the title in meaning. In most cases, Bing uses the description to form the snippet in the search results.

- <meta name="robots">. Bingbot uses it as a crawling and indexing instruction.

- <a>. It is used to place links. You can use an anchor to link to a specific part of the page.

- <img>. It is used for images. The alt attribute lets you describe the image.

- <h1>-<h6>. They help Bing determine the structure of the document and understand the content of each section.

- <p>. It denotes paragraphs in the text.

- <table>. It helps identify tabular data. Bing doesn’t recommend using tables for page layout.

- The semantic elements of HTML5 also have value for the search engine. You can use tags such as <details>, <footer>, <header>, and <mark>.

- It’s recommended to check HTML with Bing SEO Analyzer and the W3C Validator.

Separately, Bing recommends checking that pages display properly in the Microsoft Edge browser. Content should render and be readable as soon as the document loads, without pop-ups. Remember that Microsoft owns Bing.

Also, Bingbot should have access to the JavaScript and CSS files needed to display the page properly. It’s a good idea to minimize the number of HTTP requests needed to render JS content.

Bing supports semantic markup: Schema.org, RDFa, and OpenGraph. The preferred option is Schema.org in JSON-LD or Microdata format.
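As an illustration of the JSON-LD option, the sketch below generates a Schema.org Article block ready to paste into a page's <head>. The function name, the chosen properties, and all sample values are assumptions for the example; the right type and properties depend on your content.

```python
import json


def article_json_ld(headline, author_name, date_published, url):
    """Return a <script> tag containing Schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    # Escape "</" so the JSON can never close the surrounding <script> tag.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


tag = article_json_ld(
    "How Bing indexes sites",              # sample values, not real data
    "Jane Doe",
    "2021-02-05",
    "https://www.example.com/bing-guide",
)
```

Whatever you generate must describe what is actually on the page; markup that does not match the visible content counts as misleading markup.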

How does Bing Rank Content?

Bing’s search results are generated by its own algorithm, which matches search queries against the documents in its index. Organic positions cannot be improved with paid methods; contextual advertising exists separately and is configured through Microsoft Advertising. Bing does not prioritize Microsoft products in organic search results but may show their ad banners when they are relevant to the query.

Here are the key parameters used to evaluate and rank organic results. The relative importance of each depends on the search query and changes from time to time:

- Relevance. Determined by how well the page content matches the search query. This includes occurrences of key phrases in the document’s text and in the anchor text of links pointing to it. Bing also considers semantic equivalents, such as synonyms and abbreviations, that do not appear in the query itself but relate to it.

- Quality and credibility. This parameter is influenced by the reputation of the resource and the level of discourse (for example, articles that cite sources for facts and quotations). Bing can demote sites that post offensive or derogatory content.

- User engagement, i.e., behavioral factors showing how people interact with the site. To gauge overall interest, Bing considers CTR, session time, returns to the search results, and query reformulation after visiting sites from the results.

- Freshness. Bing generally prefers fresher content but takes into account the topic and how quickly the material loses relevance (news content, for example).

- Page load time. Bing considers the site’s speed as part of its user experience assessment but encourages webmasters to find the right balance between speed and usability.

Characteristics of goods or services on the site are not ranking factors, except when they may be potentially harmful or offensive (promotion of suicide, adult content, drug sales, etc.). This differs from some other search engines. In particular, Yandex analyzes the price of goods and includes it as one factor in its commercial ranking.

What should be avoided when promoting a site on Bing?

Bing recognizes SEO as a legitimate and positive practice for improving the technical and content aspects of a site, making it easier to find and more accessible for search engines and users. However, Bing warns that SEO work does not guarantee high positions or increased traffic.

In its webmaster guidelines, Bing lists some common forms of abuse involving manipulative and misleading content. Sanctions can range from demotion in the search results to complete exclusion from the index. The list of examples is not exhaustive. If you think a sanction is unfair, you can always contact support through Bing Webmaster Tools. Similarly, regular users can report any abuse to Bing support.

- Cloaking. The practice of showing users content that differs from what is shown to search robots. Bing cracks down on such methods fairly harshly, treats them as spam, and can exclude the entire site from the index.

- Link manipulation. It may also result in exclusion from the Bing index. Links should be natural.

- Social media schemes. As with link spam, artificially created social activity (mass following and auto-subscription) leads to sanctions in the search engine and, according to Bing, is easily detected.

- Content duplication. With mass duplicate content, Bing loses trust in the domain, and mass use of rel="canonical" for the same content should be treated as a secondary solution. First of all, Bing recommends setting up rules for ignoring URL parameters in Webmaster Tools to avoid duplicate problems.

- Copied content. Copying content can be considered copyright infringement and can lead to demotion even when small original changes are made. Bing recommends either adding original content and value or creating new content entirely.

- Keyword over-optimization (keyword stuffing). It also leads to lower positions or removal from the index. Content should be optimized for users, not just for queries.

- Auto-generated content. As a rule, automatically generated content is considered malicious and leads to penalties.

- Affiliates. Sites that pose as independent but exist to promote products from other sites (Amazon, eBay, etc.) and offer no additional value to users can also be demoted and deindexed.

- Malware. When creating content and managing a website, the webmaster must make sure the site is not involved in phishing or in spreading malware, viruses, etc. Sanctions are demotion in search and removal from the index.

- Misleading markup. Structured data must be strictly consistent with the content published and the overall theme of the page.

CASE-STUDY: How I Managed to Re-Index My Website on Bing

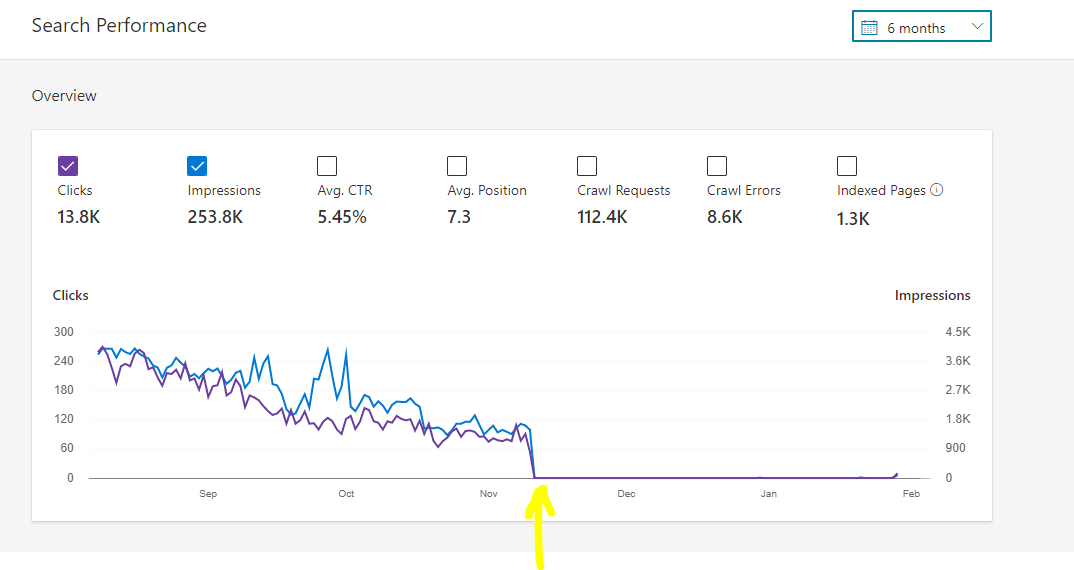

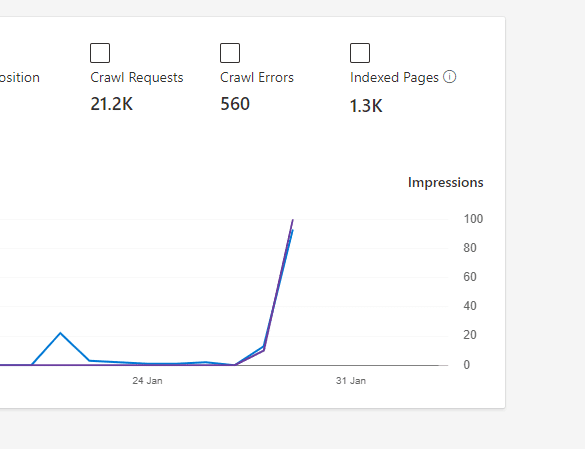

Here is a snapshot of one of my websites that got de-indexed on November 10, 2020.

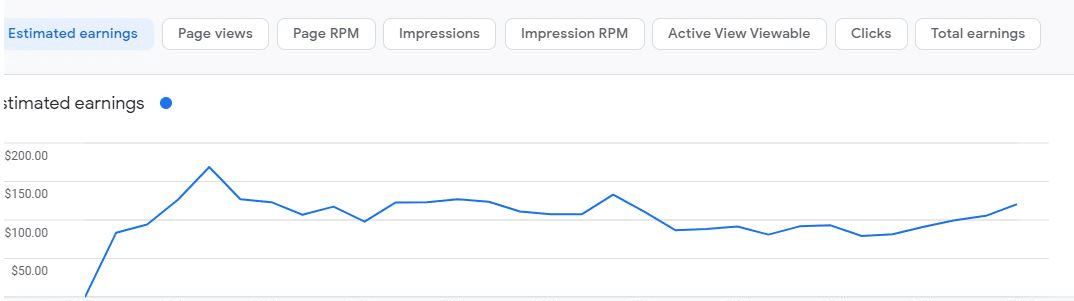

If you are reading this post, I am sure you are a victim of Bing (so was I). Initially, I never paid attention to Bing. It was in November 2020 that I started seeing a major drop in my website’s traffic. I dug deeper and finally realised that my website had been completely de-indexed from Bing, resulting in a drop in my web traffic and AdSense income.

My Website Got Deindexed From Bing: What were the reasons?

I had no idea why my website got completely deindexed from Bing. I did try to Google it, but unfortunately, I HAD NO ANSWER! Since my website got de-indexed in November, I looked at the changes I had made during that period.

1. Design Changes and Several 404 Errors

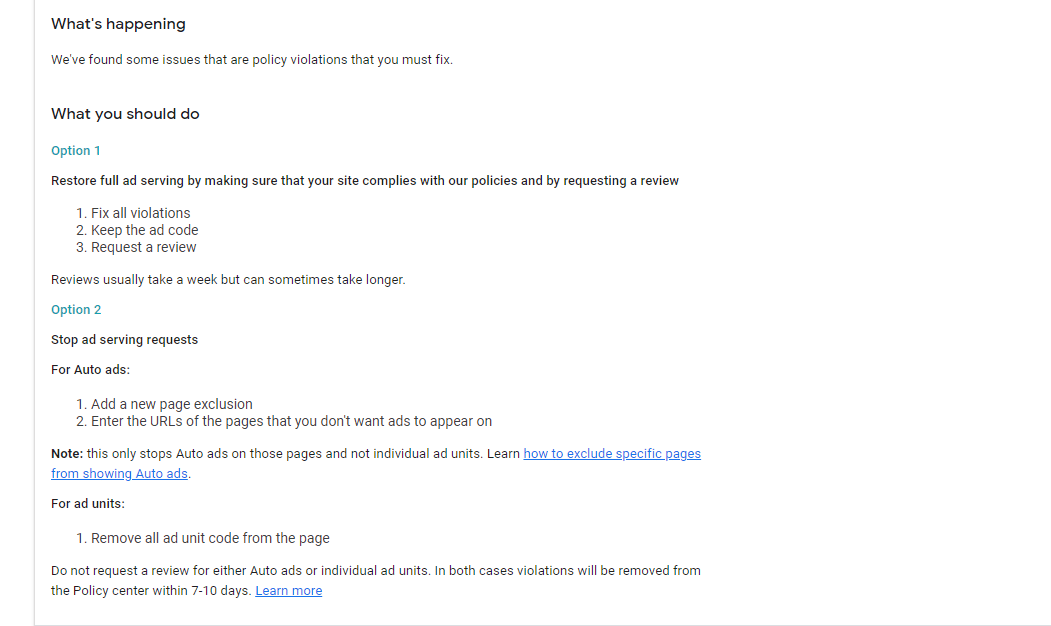

It was in early November 2020 that I got a policy violation on my AdSense account.

The main cause of the policy violation was the unlimited number of download links in my posts and my website template.

Due to these policy violations, I had to remove many of my posts, which resulted in 404 errors on my website, and I had to revamp my overall website design. It was when I changed my website’s design that my website got de-indexed from Bing.

BING IS STRICT: They don’t like too many changes happening at once.

2. Duplicate Content

Duplicate content was one of the major reasons my website was deindexed from Bing. I had several posts ranking for similar keywords. I never used a rel="canonical" element to mark duplicate content on my website and thus ended up deindexed.

Bing has clearly specified its rules on duplicate content, which I did not follow.
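One way to find overlapping posts before Bing does is to compare them pairwise. The sketch below uses word shingles and Jaccard similarity, a common near-duplicate heuristic and not necessarily what Bing itself uses; the 0.5 threshold, the post slugs, and the sample texts are all assumptions for illustration.

```python
import re


def shingles(text, size=3):
    """Return the set of overlapping word n-grams ("shingles") in a text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(text_a, text_b):
    """Similarity from 0.0 (nothing shared) to 1.0 (identical shingle sets)."""
    a, b = shingles(text_a), shingles(text_b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(posts, threshold=0.5):
    """Return pairs of post slugs whose similarity reaches the threshold."""
    slugs = sorted(posts)
    return [
        (first, second)
        for i, first in enumerate(slugs)
        for second in slugs[i + 1:]
        if jaccard(posts[first], posts[second]) >= threshold
    ]


# Hypothetical posts keyed by slug.
posts = {
    "apk-today": "how to download the latest android apk for free today",
    "apk-now": "how to download the latest android apk for free now",
    "crawl-control": "bing webmaster tools crawl control settings explained in detail",
}
flagged = near_duplicates(posts)
```

Pairs that get flagged are candidates for merging, rewriting, or pointing at one version with rel="canonical".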

3. Keyword Stuffing

Keyword stuffing was another major reason my website got deindexed from Bing.

I tried a few black hat techniques to rank my website. I was unnecessarily stuffing keywords to get more exposure. I was also building PBN backlinks to rank my website. BING HATES PBN.

4. Content Automation

Bing doesn’t allow websites that run on automation. I was carelessly automating news content on my website via the RSS feeds of high-authority websites. BING KICKED ME HARD.

Content Automation is something that must be done smartly. If you are fetching content from other sources, you need to follow a set of guidelines or use artificial intelligence to make the content original. THIS IS SOMETHING I WOULD NOT LIKE TO DISCUSS HERE.

Later on, I improved my methods of automation by using a set of tools, and for now, it’s doing great!

5. Backlinks

Another possible reason for sites being deindexed from Bing is the use of unnatural backlinks to your website. This includes PBNs, bulk creation of backlinks using tools, etc.

How I Managed to Index my Website on Bing: Steps I Followed

My website got deindexed in November, and like I mentioned, I never paid attention to Bing. I realized this in early January and started my research. Here are the things I did before contacting Bing Support.

- Fixed my website structure and removed all the 404 links.

- Removed all the duplicate content.

- Strengthened my content automation: the content posted is no longer duplicate.

- Removed the old sitemap, created a fresh one, and submitted it to Bing.

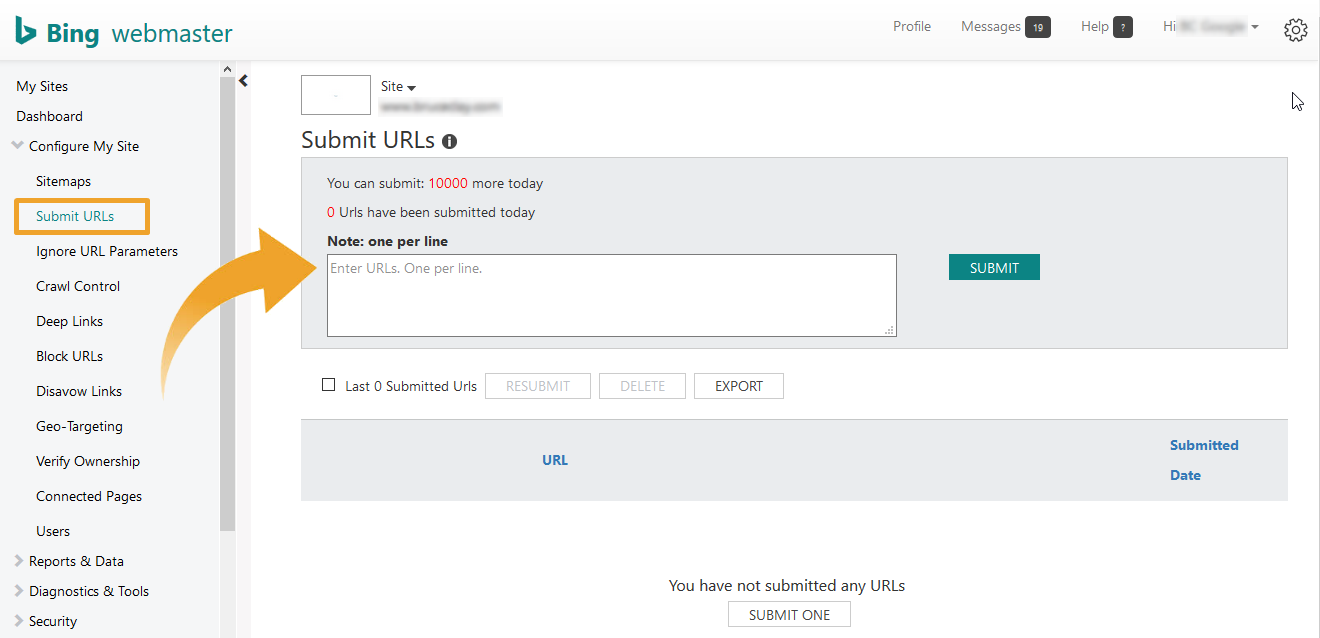

- Manually submitted crawl requests for my top-ranking pages.

After fixing all these issues, I submitted a request to BING SUPPORT.

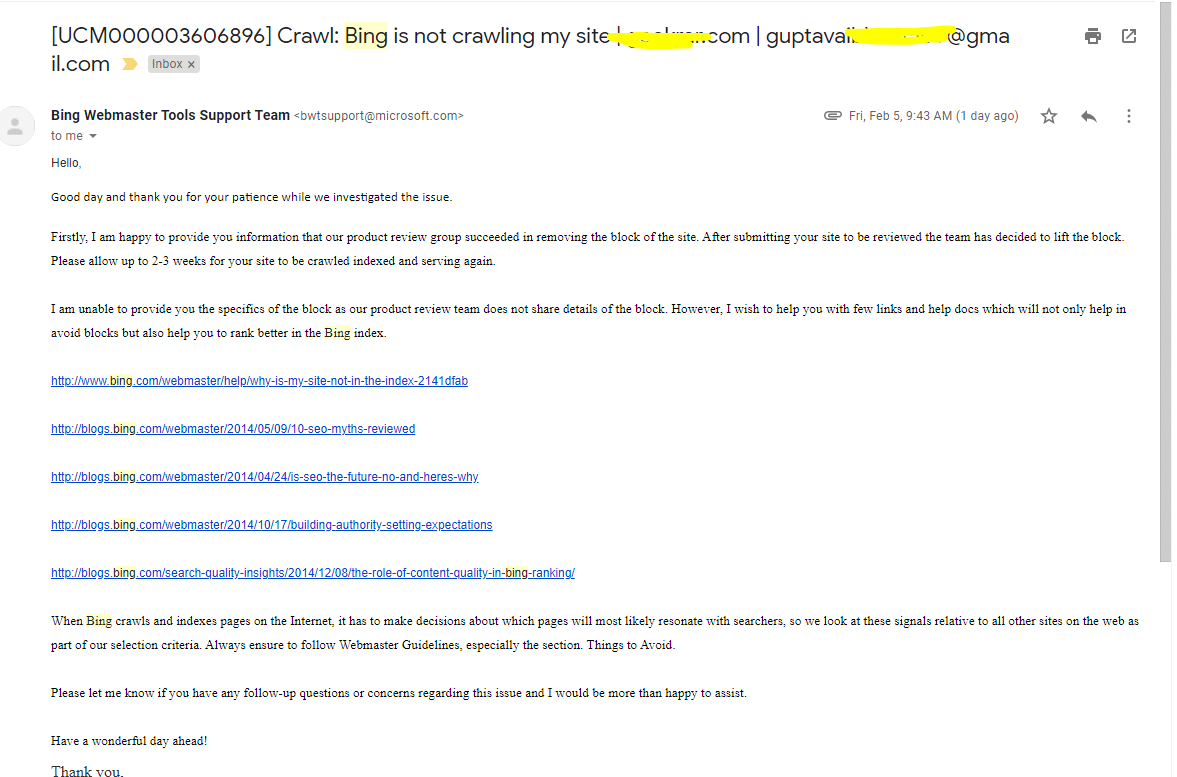

I mailed them on 23rd January and got this reply after a few weeks.

On February 5, 2021, I got an email from support saying that the block on my website had been removed and that my site had been reindexed.

This case study is based on my experience of bringing my site back on Bing. I did manage to fix my AdSense policy violation with a professional website design and content modification, and my Bing webmaster issue as well.

Feel free to share your experience and other tips for fellow bloggers below.

20 Comments

Hello Sir!

I read your article about the site blocked by Bing. After emailing support, you got back into the Bing SERP.

However, in my case, when I contacted Bing customer support, they sent this message:

Note: my website does not belong to porn, gambling, or any misleading content. it is working fine on google.

Thank you for your patience during our investigation. After further review, it appears that your site had issues with meeting the standards set by Bing to remain indexed the last time it was crawled. To ensure that this was not a false flag, I also escalated the issue to the next level and they manually reviewed your site and confirmed that it is in violation of our Webmaster Guidelines detailed here:

https://www.bing.com/webmaster/help/webmaster-guidelines-30fba23a.

We are not able to provide specifics for these types of issues because the relevant teams do not share reports with us. but we recommend that you review our Webmaster Guidelines, especially the section Things to Avoid, and thoroughly check your site for any deliberately or accidentally employed SEO techniques that may have adversely affected your standing in Bing and Bing-powered search results. We will not be able to add your site to the index while Bingbot is finding it in violation of Webmaster Guidelines. We have no control over this process and you will need to make quality changes to your site and wait for Bingbot’s automated crawling process to agree that the site passes Webmaster standards before it can be served.

Also, I spent some more time reviewing your site and was able to find a few links and docs which will help you in this scenario.

Please help me!

Can you please share your website URL?

Did you make any recent changes to your site? Added content in bulk? Removed content in bulk? Changed the theme? Build bulk backlinks? Or anything unusual?

No, everything is working fine. I didn’t even get any errors in Bing Webmaster Tools. When I emailed them, they replied that my website has been blocked by Bing.

I cannot make my site URL public.

Please drop an email at admin@bossfunnel.com

Hello, thanks for sharing this wonderful post. My website was deindexed recently after I changed the theme. I contacted the support team, and they said my site is on their spam list and that they need to investigate further. I’m still waiting. Is there any alternative?

You can wait for their reply. I’m sure they will get this fixed for you in some time. 🙂

You can also ask them for specific reasons as well. (For deindexing your website)

My site was de-indexed in December 2020 after I set up some redirects. I have removed the redirects and emailed Bing support. I got a reply that my site does not meet the requirements to remain indexed. I contacted Bing support again and was told to redesign my site and remove any duplicate content. My problem is, I don’t know how to redesign my site or how to find duplicate or copyrighted content. I need your help with my site myfreshgists.com

Well, your website is not user-friendly at all. You can install any premium theme like contentberg and give it a professional look.

To find duplicate content, you can use a tool like quetext.com

Let me know if you need any help.

I Just got a reply from Bing

Hope you are doing well!

Thank you for your patience during our investigation. After further review, it appears that your site did not meet the standards set by Bing to remain indexed the last time it was crawled. To ensure that this was not a false flag, I also escalated the issue to our Product Team and they manually reviewed your site and confirmed that it is in violation of our Webmaster Guidelines detailed here: https://www.bing.com/webmaster/help/webmaster-guidelines-30fba23a.

We are not able to provide specifics for these types of issues but we recommend that you review our Webmaster Guidelines, especially the section Things to Avoid, and thoroughly check your site for any deliberately or accidentally employed SEO techniques that may have adversely affected your standing in Bing and Bing-powered search results.

Hi there, my site droidtheory.com has also been deindexed from Bing. I created some backlinks, but I don’t know whether that is the reason. Can you help me get my website back?

Did you use any online tools or spam methods to create those backlinks?

Did you get in touch with the Bing team and ask them for specifics?

I contacted them several times, but there is no solution. I have not created any backlinks for almost 2 years, but the issue is still not resolved.

Hello!

I have read your helpful article. Fixed all the problems that were written on the Bing page. However, the support service still writes to me that my site does not conform to Microsoft Bing recommendations. What do I do in this case?

Did you try to ask them any specifics? They do inform you about what changes are required.

I experienced a similar thing, currently trying the tips from this article, I hope Bing restores my web index

Did the tips work?

Hello Vaibhav

Nice Article,

Can you tell me how you handled and resolved the situation of the content automation stuffs?

I would suggest you limit the automation to an extent and not force an unlimited number of URLs to index in the day. That would solve it.

I created a new website, and Google indexed it instantly, but after two months of trying, Bing is still not indexing my site. I will try your tips and tricks now, and I hope Bing will index my website.

Did it work?